This post is a bit technical, as I find some of the experience from the migration/upgrade fairly interesting and worth a short blog post.

We have successfully upgraded the database and migrated KeyChest to an HA database cluster. We have been using MySQL as a database system and while we did an upgrade over three major versions, we managed to complete the whole process in about 13 hours – one can say it was a busy Sunday.

Quite a bit of planning in advance paid off as the whole process was relatively smooth, with just a couple of unexpected hiccups at the very end.

The whole migration was split into several steps, so that we would always be able to rollback to a previous consistent operational state without any loss of user data.

- Test of database upgrade – we stopped the production system, just for the duration of copying of database files to a new server. In the new location, we did 3 steps of the database upgrade from version 5.5 to 5.6, 5.7, and 8.0. This turned out to be relatively easy with the upgrade from 5.6 to 5.7 taking the most time as the database was re-built.

- We re-tested creation of an HA cluster, which we had proven to work a couple of weeks back. We copied across the raw database files, changed the UUID to a new server and created a cluster using the new MySQL 8.0 Shell. The main issue was to add new servers to a cluster. By experimenting, we found that the easiest approach is to create a test cluster on the new server, which we then dissolved and made sure that the database is reset to avoid problems with “runaway transactions”.

- The actual migration followed and it consisted of three tasks: a final database migration – a repetition of steps 1 and 2, migration of the server to a new virtual network (VPC), and re-configuration of the KeyChest application to use the new database. The production system was offline for maintenance throughout.

- The final step was re-configuration of DNS records and adding a 302 redirect to the old server to get round cached DNS records with the old IP address.

- A follow-up monitoring showed a couple of issues – resulting from human errors, but the system seems to be running smoothly now.

Overall a success, with the first phase of the migration done, we can now focus on the second and final phase, which is an upgrade to a new version. This and any further changes can now be done without downtime thanks to the HA database configuration.

The database migration was the main factor in the overall time we needed. Whilst we briefly played with an SQL – based dump of the production database, this method quickly turned out to be impractical, as imports into a new database would take over a week in Amazon’s AWS. And if you think we just need more processors, the fact is that the CPU was not the bottleneck – the real problem is access to the disk.

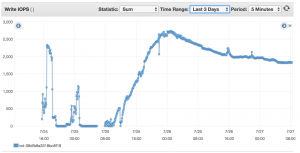

While it’s quite easy to scale the number of processors, disk access is not so flexible. There are a number of posts on this topic, but AWS basically limits 2 main parameters for disk access:

- Throughput – the amount of data written to a disk – KB/s; and

- Number of operations – input/output operations – IO/s.

The latter scales with the size of your disk volume and you get 3 IO/s for each gigabyte, with the lower threshold set at 100 IO/s. So 10GB disk will give you the same number of operations, 100 IO/s as 30GB disk.

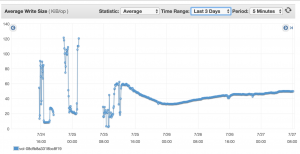

Throughput is pretty much constant and it should be between 90 – 160 MB/s, we experienced 91MB/s.

Which of the limits your applications reach depends on the size of blocks the applications write/read from disk. The following charts show how MySQL behaved with one of our applications – the ultimate limit was the data throughput of 90GB/s as we haven’t reached the IO/s limit at any time. (The interesting part is the right half of the charts.)

The disk speed seems to be the main constraint we have encountered when working with MySQL and larger databases. 90GB/s is still a relatively fast access, but MySQL repeatedly updates same blocks – e.g., index tables, so the actual number of rows inserted into your tables is much smaller than one would expect.

One thought on “Expanding KeyChest For Increasing Usage”