While still at Cambridge University, Vashek Matyas and I looked at the definition of privacy in the Common Criteria standard. With Snowden and Echelon some years later, our definitions of unlinkable anonymity and pseudonymity make even more sense.

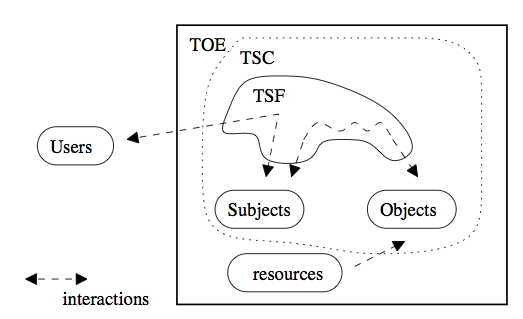

The Common Criteria standard defines four different qualities of privacy for users (see the image above, which shows how users interact with an evaluated system).

Unobservability – ensures that a user may use a resource or service without others, especially third parties, being aware that the resource or service is being used.

Systems providing unobservability should prevent users from obtaining any information about other users’ communications or their access to and use of a resource or service. Several countries, e.g. Germany, consider the assurance of communication unobservability an essential part of the protection of constitutional rights. The best-known threats are malicious observation (e.g. through Trojan horses) and traffic analysis (by anyone other than the communicating parties).

Anonymity – ensures that a user may use a resource or service without disclosing their identity. The property provides protection of the user identity (see the picture above), not the identity of the subject.

This property makes it very difficult to establish trust between the system and users as users remain anonymous. Possible applications include confidential enquiries to public databases. A protected asset is usually the identity of the requesting entity, but can also include information on the kind of requested operation (and/or information) and aspects such as time and mode of use.

Unlinkability – ensures that a user may make multiple uses of resources or services without others being able to link these uses together.

The protected assets are the same as in Anonymity. Relevant threats can also be classed as “usage profiling”.

Pseudonymity – ensures that a user may use a resource or service without disclosing their identity, but can still be accountable for that use.

Possible applications are usage and charging for phone services without disclosing identity, “anonymous” use of an electronic payment, etc. In addition to the Anonymity services, Pseudonymity provides methods for authorisation without identification (at all or directly to the resource or service provider).
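To make "authorisation without identification" more concrete, here is a minimal sketch in Python. The class `PseudonymIssuer` and its methods are hypothetical names invented for this illustration; they do not come from the Common Criteria. The sketch assumes a trusted issuer that is the only party holding the mapping from pseudonym to user.

```python
import secrets


class PseudonymIssuer:
    """Hypothetical trusted party that hands out pseudonyms (illustration only)."""

    def __init__(self):
        # Only the issuer holds this mapping; service providers never see it.
        self._pseudonym_to_user = {}

    def issue(self, user_id):
        """Return a fresh random pseudonym that carries no information about user_id."""
        pseudonym = secrets.token_hex(16)
        self._pseudonym_to_user[pseudonym] = user_id
        return pseudonym

    def is_authorised(self, pseudonym):
        """A service can check that a pseudonym is valid without learning who owns it."""
        return pseudonym in self._pseudonym_to_user

    def resolve(self, pseudonym):
        """Accountability: the issuer (and only the issuer) can recover the identity."""
        return self._pseudonym_to_user[pseudonym]


issuer = PseudonymIssuer()
pseudonym = issuer.issue("alice")
print(issuer.is_authorised(pseudonym))  # the service sees only the pseudonym
```

Note that a user who reuses one pseudonym for every request remains accountable but is also easy to profile, which is where unlinkability comes in.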

There are several problems with those definitions. The first issue is that none of the properties can be implemented perfectly: research into privacy systems has shown that any interaction with a security system leaks some information about its users. We have suggested methods for quantifying privacy, in line with that research, so that different privacy systems can be compared.
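One way to put a number on "how much anonymity is left" is the Shannon entropy of the attacker's probability distribution over candidate users. This measure is common in the anonymity literature, but treat it here as an assumed example and not necessarily the metric we proposed. The function names below are made up for this sketch.

```python
import math


def anonymity_entropy(probabilities):
    """Shannon entropy (in bits) of the attacker's guess distribution over users."""
    total = sum(probabilities)
    if total <= 0:
        raise ValueError("probabilities must sum to a positive value")
    entropy = 0.0
    for p in probabilities:
        if p > 0:
            q = p / total  # normalise so the weights form a distribution
            entropy -= q * math.log2(q)
    return entropy


def effective_anonymity_set_size(probabilities):
    """Number of equally likely users that would give the same entropy."""
    return 2 ** anonymity_entropy(probabilities)


# Four users the attacker cannot tell apart: the ideal case.
uniform = [0.25, 0.25, 0.25, 0.25]
# The same four users after some information has leaked.
skewed = [0.85, 0.05, 0.05, 0.05]

print(anonymity_entropy(uniform))  # 2.0 bits
print(anonymity_entropy(skewed))   # noticeably less than 2 bits
```

The set of possible users has the same size in both cases, yet the second system protects its users much less, which is why a simple head count of the anonymity set is not enough to compare systems.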

The second issue is the effect of linkability, i.e. user profiling, on the other privacy properties. The four properties of privacy are not completely orthogonal; they may influence each other. The main threat is the ability of attackers to collect data about users over the long term and subsequently use statistical methods to de-anonymise them.
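A toy illustration of this threat is the so-called intersection attack. Assume (hypothetically) that the attacker can link several uses of a service to the same unknown user and knows, for each use, the set of users who could have made it. The user names and the helper `intersection_attack` are invented for this sketch.

```python
def intersection_attack(anonymity_sets):
    """Intersect the candidate sets of several linked observations."""
    candidates = None
    for observed in anonymity_sets:
        if candidates is None:
            candidates = set(observed)
        else:
            candidates &= set(observed)
    return candidates if candidates is not None else set()


# Each set lists the users who were active when one linked request was made.
observations = [
    {"alice", "bob", "carol", "dave"},
    {"alice", "carol", "erin"},
    {"alice", "bob", "frank"},
]

print(intersection_attack(observations[:1]))  # four candidates after one observation
print(intersection_attack(observations))      # {'alice'}
```

Each request on its own hides the user among three or four others; it is only the ability to link the requests that reduces the candidate set to a single user.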

Unlinkable pseudonymity – the semantics of this property are built on the assumption that knowledge of several pieces of mutually related information is much more powerful than knowledge of just one such piece. When compared with the previous definition of unlinkable pseudonymity, the definition is now concerned with ensuring that the probability of correctly identifying a given user does not increase when more information becomes available. The same reasoning lies behind the following definition of unlinkable anonymity.
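The "no increase in probability" condition can be sketched as a simple Bayesian check. This is an illustrative model and not a formal definition: it assumes the attacker starts from a prior over users and that each observation comes with a likelihood per user. All names (`posterior`, `identification_trace`, `leaks_through_linking`) are hypothetical.

```python
def posterior(prior, likelihoods):
    """One Bayes update: weight each user's prior by the observation likelihood."""
    unnormalised = {u: prior[u] * likelihoods.get(u, 0.0) for u in prior}
    total = sum(unnormalised.values())
    if total == 0:
        raise ValueError("observation is impossible under the prior")
    return {u: w / total for u, w in unnormalised.items()}


def identification_trace(prior, observations, target):
    """Probability the attacker assigns to `target` after each observation."""
    belief = dict(prior)
    trace = [belief[target]]
    for likelihoods in observations:
        belief = posterior(belief, likelihoods)
        trace.append(belief[target])
    return trace


def leaks_through_linking(trace, tolerance=1e-12):
    """True if any additional observation raised the identification probability."""
    return any(later > earlier + tolerance for earlier, later in zip(trace, trace[1:]))


prior = {"alice": 0.25, "bob": 0.25, "carol": 0.25, "dave": 0.25}

# Observations that are more likely to come from alice than from anyone else.
linkable = [{"alice": 0.6, "bob": 0.2, "carol": 0.1, "dave": 0.1}] * 2
# Observations that look the same no matter which user produced them.
unlinkable = [{"alice": 0.5, "bob": 0.5, "carol": 0.5, "dave": 0.5}] * 2

print(leaks_through_linking(identification_trace(prior, linkable, "alice")))    # True
print(leaks_through_linking(identification_trace(prior, unlinkable, "alice")))  # False
```

In the linkable case the attacker's confidence in "alice" grows with every extra observation, which is exactly what the property is meant to rule out.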

Unlinkable anonymity – an attack exploiting weak unlinkability may degrade the anonymity provided by the system. Basically, unlinkability should ensure that a particular implementation does not contain side channels that could be exploited when several service invocations occur.