First and foremost, I want to apologize to all users of our KeyChest service who have been impacted by a recent incident. It caused a service interruption which lasted around 8 hours. We also lost around 40% of the KeyChest production database.

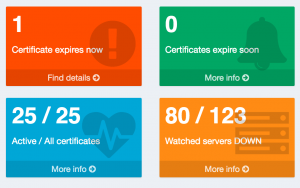

We have been developing KeyChest (as run at https://keychest.net) because we saw an interest in this kind of service. We started the project to manage our own certificates, but it attracted over 1,000 users in the few months of its existence as a free cloud service. While we kept adding features and focused more on building the KeyChest certificate renewal system, we forgot about the basics, as we didn’t properly realize how big it had become – there is something in that story about a frog and hot water.

I’m not trying to provide an excuse – there’s hardly any – just an explanation. All I can say is that we learned a lesson the hard way. All bad things have a silver lining; for us, it was a moment to pause, think, and decide what we really want to do about it. We decided to show that we can learn and get better. We decided to strengthen KeyChest and make it a dependable, high-availability service. We simply believe that one such lesson is one too many, even for a free service.

Post-Mortem

As part of a system upgrade for user roles, we accidentally deleted the production database storing user accounts – in simple terms, too many terminal windows on one laptop.

We panicked and scrambled to figure out what, why, how. We quickly realized that the latest suitable backup contained only about 60% of user accounts. We eventually recovered the service using this backup and started looking for any traces that would help us notify other users who now depend on KeyChest. Unfortunately, as we keep the production data separate from any test and development instances, and do not use or copy the data anywhere else, we could find very little.

While still in panic mode, we made some further unforced mistakes. For example, we didn’t make a snapshot of the EC2 server as a matter of urgency to preserve its state. Instead, we recovered the database and in the process deleted any remaining data that might have been left and could have been used to lower the impact. I’m not sure it would have been possible, but it was out of the question afterward. (I personally found it surprising that panic and sudden pressure can force excellent developers into making mistakes they would laugh at otherwise.)

Way Forward

We have made a list of measures to protect us against any future disasters. We discussed a number of things and eventually agreed to implement the following:

- Adjust privileges for database access – disable “catastrophic” commands, add per-table logging. STATUS: being deployed.

- Backup of the database – automated daily database backups stored on a dedicated server. STATUS: completed.

- Snapshots of EC2 – a daily snapshot is now taken automatically and we will keep up to 2 weeks of snapshots. STATUS: completed.

- Public monitoring of uptime, incidents, and any access to prod servers – https://keychest.status.io now shows each remote access to the production server. STATUS: completed.

- Create an HA cluster – the plan is to create another server located in Europe and eventually a third one in Australia / South-East Asia; they will use a database cluster. STATUS: planned.

- Review exports of certificates and active domains from user accounts to allow users to back up their configuration. STATUS: in progress.
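
To make the snapshot retention rule from the list above concrete, here is a minimal sketch of how a "daily snapshot, keep up to 2 weeks" policy could be expressed. It is not the script KeyChest runs: the function name `split_snapshots`, the `(snapshot_id, created_at)` input shape, and the rule of always keeping the newest snapshot are assumptions made for illustration. It only decides which snapshots to keep; the actual deletion call to the cloud provider is deliberately left out.

```python
from datetime import datetime, timedelta, timezone

# Assumed retention window, matching "up to 2 weeks of snapshots" above.
RETENTION = timedelta(days=14)


def split_snapshots(snapshots, now, retention=RETENTION):
    """Split (snapshot_id, created_at) pairs into (keep, expire) lists.

    A snapshot is kept while its age is within the retention window.
    The newest snapshot is always kept, even if all snapshots are old,
    so a stalled snapshot job never leads to deleting the last copy.
    """
    # Newest first, so index 0 is the most recent snapshot.
    ordered = sorted(snapshots, key=lambda s: s[1], reverse=True)
    keep, expire = [], []
    for index, (snap_id, created_at) in enumerate(ordered):
        if index == 0 or now - created_at <= retention:
            keep.append(snap_id)
        else:
            expire.append(snap_id)
    return keep, expire


if __name__ == "__main__":
    # Arbitrary reference time and made-up snapshot ids, for illustration only.
    now = datetime(2020, 1, 31, tzinfo=timezone.utc)
    daily = [(f"snap-{d:02d}", now - timedelta(days=d)) for d in range(20)]
    keep, expire = split_snapshots(daily, now)
    print(len(keep), "kept;", len(expire), "to delete")
```

Keeping the decision separate from the deletion makes the policy easy to test and to run in a dry-run mode first, which matters for exactly the kind of destructive operation that caused this incident.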

We have already implemented most of these actions. The big one is the HA cluster, which will require a small proof-of-concept first. If all goes well, we will be able to monitor servers as identified by DNS servers in 2 or 3 of the geographic regions (providing better coverage of Geo DNS / DNS anycast).

While we try to turn this disaster into something positive, we can’t replace the time and effort KeyChest users put into their accounts.

Please allow me, once more, to apologize to all KeyChest users for the inconvenience or worse.